Life has outgrown your nervous system

We all need an external nervous system for family life.

At some point in the last year, I realised I had become the family router. Not the noble father-as-provider, oak-tree-in-the-storm kind. I mean the cheap plastic box under the stairs.

Everything came through me. Packets from Gmail, Slack, the calendar. From my wife, my mother, the baby, the contractor, the pantry, the bank. From the grey goo in my head at 5:30 in the morning. And my job was to route all of it: this email belongs to the property project; this invoice needs paying; this half-remembered conversation last night means we need rice, diapers, and wipes; this random thought in the shower might be a blog post or might be nonsense.

The problem with using yourself as the router is that eventually you start dropping packets. You forget the drawer. You forget the meat in the freezer. You forget bin day. You forget the one important email hiding inside two hundred pieces of inbox sewage. And then, worst of all, you start forgetting to be there. Physically present, mentally compiling.

That is the actual problem. Not productivity. Not AI. Not note-taking. Not “agents.” Not the app stack.

Presence.

In “Presence in an hour” I wrote that the question isn’t “how do I use screens less?” but “how do I reduce my need for screens?” The Screenless Dad isn’t trying to go Amish. I’m not moving into a forest and churning butter unless the butter comes with an API. The goal is to build a life where technology serves presence instead of constantly extracting it. Alfred is my most serious attempt at that.

But I think I’ve been describing Alfred slightly wrong.

I called him our family butler, and that’s true. In my first Alfred post, I wrote that a butler’s job is delegation of attention. Not just doing things, but owning them, noticing what’s wrong, being anticipatory.

That’s the visible layer. But the deeper thing is this:

I’m building Alfred because my responsibilities have outgrown my nervous system.

Or, more precisely: I’m trying to stop using my brain as a codec for my life.

Let me explain.

The world doesn’t send you tasks

The world doesn’t send you neat little tasks. It sends you artifacts. An email. A Slack message. A calendar invite. A meeting transcript. A voice note. A receipt. A bank transaction. A photo of a broken drawer. A sentence from your wife while you’re both half-asleep and the baby is making goat noises in the middle of the bed.

None of these arrive labelled correctly. The email doesn’t say “Hello David, I am a medium-priority administrative commitment related to your household finances and I should become a task by Friday.” The meeting transcript doesn’t say “there were three decisions, one contradiction, and one promise you made while nodding too confidently.” The calendar event doesn’t say “this starts at 2pm but traffic means your actual deadline is 1:15pm, and if you’re still at the laptop at 1pm someone needs to physically intervene.”

The world sends messy artifacts. Then your brain has to decode them. That hidden decoding work is what destroys me, because every morning I have to rebuild the context of my life from fragments.

The human brain is very good at some things. It can read a baby’s expression, make weird creative leaps, pray, love, detect that something is off in a room before anyone has said anything, connect two ideas from ten years apart and build a company around them.

It is not designed to be the always-on integration layer between Gmail, Slack, calendars, finances, health data, project management tools, family logistics, and home maintenance.

Yet that is exactly what modern life asks of it.

Congratulations: you’re an ape with an iPhone, a mortgage, a calendar, a baby, and seventeen SaaS tools.

You are not meant to be a codec

A codec is a thing that encodes and decodes: it takes one kind of signal and turns it into another. An audio codec turns sound into compressed digital data and back into sound. A video codec turns moving images into files and back into moving images.

For most of my adult life, I’ve unknowingly used my brain as the codec between the artifacts of my life and the actions my life requires.

An email comes in, and my brain decodes it: this is from that person, it refers to that project, it implies this task, it affects that deadline, it should be answered after I check that document, it probably matters but not now.

A meeting happens: this person is worried, that thing is blocked, this decision was made, this promise was implied, this tension wasn’t said out loud but it’s there.

My wife says something while I’m cooking: we’re out of rice; add it to the shopping list; if we’re ordering rice, also check diapers; if we’re checking diapers, check wipes; and why is there a single sad onion in the pantry?

This is the work. Not typing. Not clicking. Not “being productive.” Holding together all the threads of your messy life like Loki holds the timelines together.

The work is decoding messy life artifacts into meaning, then re-encoding that meaning into tasks, reminders, decisions, notes, schedules, messages, and actions.

That’s what I want Alfred to do. Not because I want to become a cyborg productivity bro who drinks Soylent and has a Notion dashboard for his emotions. Because I want to make pancakes with my daughter without mentally parsing an invoice. And because I prefer Huel to Soylent.

Artifacts are keys

A document doesn’t contain meaning by itself. A spreadsheet doesn’t. An email doesn’t. A code file doesn’t even do anything by itself. It needs an interpreter, a compiler, a runtime, libraries, an environment, and some poor developer swearing at logs at night.

Meaning is reconstructed, so every artifact needs a decoder.

A text from my wife that says “the upstairs problem is back” means almost nothing to a stranger. Heat? Cold? Mould? Plumbing? Ghosts? But to me it might unlock an entire mental folder: the upstairs gets too hot in summer and too cold in winter, the attic insulation might be wrong, we talked about the roof cavity, I need to find the notes from last time. The sentence is tiny. The meaning is large. The sentence is a key, not meaning. And the key only works because I already have the background model.

This is true for everything. A CSV is a key. An Obsidian note is a key. A family calendar, a shopping list, a meeting transcript, a voice note, a photo, a book, a report — all keys. The artifact is not the full reality; it’s a compressed representation that lets the right receiver reconstruct the right thing.

artifact + decoder + background model = reconstructed meaning

The reason couples can speak in fragments isn’t that marriage improves language. Marriage builds a shared decoder. The reason teams develop acronyms isn’t that they hate normal people (though sometimes), but that shared context makes communication cheaper. The reason codebases become illegible to outsiders is that half the meaning lives in conventions, architecture, weird historical decisions, and one Slack thread from 2021 nobody can find.

The artifact is just the key. The model does the work.

My brain has been doing the model work

For most of my life, I thought my problem was memory.

I forget things. So I tried memory tools: notes, todo apps, calendars, alarms, project management systems, second brains, third brains, possibly a fourth brain but I forgot where I put it.

But my actual problem isn’t memory. It’s model maintenance.

My brain is constantly trying to maintain a model of everything I’m responsible for: my family, my work, my health, my finances, my home, my commitments, my relationships, my projects, my promises, my ideas, and my future self (who I apparently hate, because I keep giving him undocumented tasks).

Every time I check a tool, I’m not just checking a tool. I’m rebuilding the model.

What changed?

What matters?

What did I promise?

What’s now urgent?

What can be ignored?

What belongs to which project?

What should I tell my wife?

What should become a task?

What should disappear forever?

That reconstruction cost is brutal. Especially with ADHD. Meds help. Routines help. Fewer screens help. But the underlying architecture is still insane.

A modern parent’s life is too fragmented for one biological attention system.

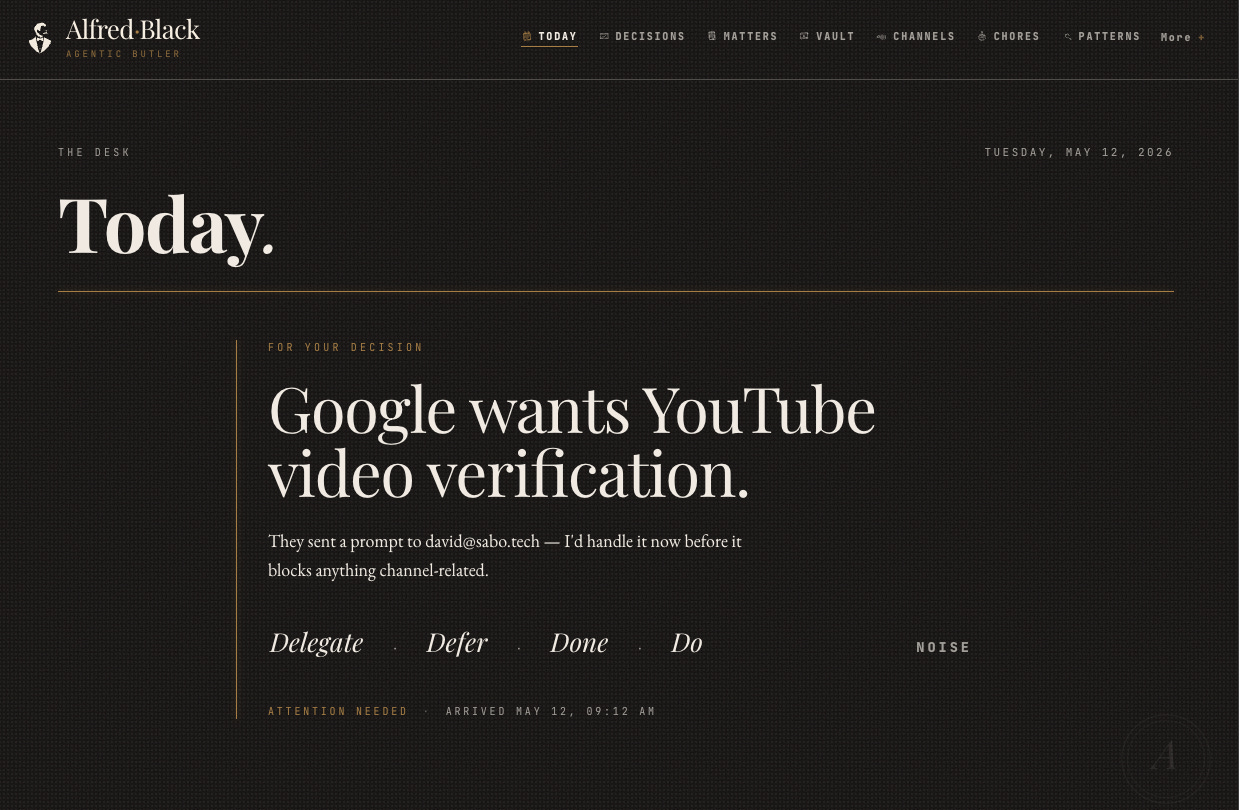

So Alfred isn’t just “AI that helps me.” Alfred is a second attention system. A private one. A durable one. A boring one, ideally. I don’t want Alfred to be dazzling. I want him to notice that the email from the contractor belongs to the house matter, update the task, and only interrupt me if something actually needs my judgment.

I want boring competence. Jeeves with an audit log.

Alfred isn’t a chatbot

Most people misunderstand AI assistants. They imagine a chat window. You type something, it replies. You type again, it replies again.

That’s fine, but it’s not a butler.

That’s a very articulate vending machine.

A butler doesn’t wait for you to prompt every single thing. A butler knows the house. He knows the routines. He knows what’s normal. He notices what changed. He knows when not to bother you, and when to bother you immediately.

This is why Alfred needs a vault: he turns emails, meetings, calls, notes, documents, and ambient recordings into a structured, interconnected record of everything that matters. Explicitly not another app or dashboard you have to maintain. He meets you across the channels you already use.

That isn’t just a UX detail. The moment Alfred becomes “another app I need to check,” Alfred has failed. The whole point is to reduce the number of places my nervous system has to go hunting for context.

In the current architecture, the vault is a directory of Markdown files: people, matters, decisions, events, tasks, conversations, each one its own file, linked with wikilinks. I like that it’s Markdown. It’s plain. Readable. Ownable. Diffable. Portable. I want Alfred’s memory to be something I can inspect. I don’t want my life trapped inside some VC-backed chatbot’s vibes database. I want files. I want undo.
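To make “Markdown files linked with wikilinks” concrete, here is a minimal sketch. It assumes a hypothetical layout where every record is a `.md` file and links use the `[[Name]]` or `[[Name|alias]]` form. The folder names, the two demo records, and the `outgoing_links` helper are illustrative assumptions, not Alfred’s actual schema.

```python
import re
import tempfile
from pathlib import Path

# Matches [[Target]], [[Target|alias]] and [[Target#heading]];
# captures only the target name.
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:[#|][^\]]*)?\]\]")


def outgoing_links(vault: Path) -> dict[str, list[str]]:
    """Map each note (by file stem) to the notes it links to."""
    links = {}
    for path in sorted(vault.rglob("*.md")):
        text = path.read_text(encoding="utf-8")
        links[path.stem] = [target.strip() for target in WIKILINK.findall(text)]
    return links


def build_demo_vault(root: Path) -> None:
    """Write two hypothetical records so the sketch runs end to end."""
    (root / "matters").mkdir()
    (root / "tasks").mkdir()
    (root / "matters" / "Kitchen renovation.md").write_text(
        "Supplier: [[Cabinet supplier]]\nOpen task: [[Schedule site visit]]\n",
        encoding="utf-8",
    )
    (root / "tasks" / "Schedule site visit.md").write_text(
        "Belongs to [[Kitchen renovation|the kitchen]]\n",
        encoding="utf-8",
    )


with tempfile.TemporaryDirectory() as tmp:
    demo = Path(tmp)
    build_demo_vault(demo)
    graph = outgoing_links(demo)
```

Because the records are plain files, the same folder can be opened in any text editor or put under version control, which is one way to get the inspectability and undo described above.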

I want to know why Alfred did something.

Alfred turns noise into signals

The world emits noise. Some of it matters; most doesn’t. A newsletter arrives, a calendar event changes, a GitHub notification fires, a meeting transcript is saved, an Omi capture catches something I said while cooking, a Gmail thread updates, a random automated email coughs into the inbox.

If all Alfred does is collect everything, Alfred becomes another hoarding problem. A digital garage. So the real job isn’t collection — it’s signal extraction.

That’s the Signal Layer, where raw stream events become routed signals. This is what agents miss today:

Monitor all the surfaces of my life, silently transform that mass of raw data into signals, and file each signal under the right matter or task.

That’s what you and I are doing. That’s what being the router means. You accepted it as a normal part of adult life.

I reject it.

Here’s what this looks like as an engineering solution:

A stream event is the raw thing that happened; a signal is Alfred’s conclusion about what it means, what matter or task it concerns, what effect it should have, and how confident he is. That distinction is everything.

Raw event: an email arrived. Signal: about the kitchen renovation; the cabinet supplier confirmed the site visit; the related task should be marked scheduled. Confidence: high.

Raw event: I said something in the kitchen while wearing an ambient recorder. Signal: David committed to cleaning the pantry on Sunday; create reminder. Confidence: medium.

Raw event: calendar event changed. Signal: the meeting moved earlier and now conflicts with school pickup; escalate. Confidence: high.
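The event/signal split can be sketched as two small data types. This is an illustration under assumptions: the field names, the `Confidence` levels, and the sample payload are hypothetical, chosen to mirror the first example above rather than taken from Alfred’s code.

```python
from dataclasses import dataclass
from enum import Enum


class Confidence(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class StreamEvent:
    """The raw thing that happened, with no interpretation attached."""
    source: str   # e.g. "gmail", "calendar", "ambient-recorder"
    payload: str  # raw text of the email, transcript, or change


@dataclass(frozen=True)
class Signal:
    """A conclusion about what an event means."""
    event: StreamEvent      # the evidence the conclusion was drawn from
    matter: str             # which ongoing concern it belongs to
    meaning: str            # what happened, in plain words
    effect: str             # what should change as a result
    confidence: Confidence  # how sure the interpretation is


# Hypothetical example mirroring the kitchen renovation email.
email = StreamEvent(source="gmail", payload="We confirm the site visit.")
signal = Signal(
    event=email,
    matter="Kitchen renovation",
    meaning="The cabinet supplier confirmed the site visit",
    effect="Mark the related task as scheduled",
    confidence=Confidence.HIGH,
)
```

Keeping a reference to the original event inside the signal means every conclusion can be traced back to the artifact that produced it.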

This is why Alfred isn’t mainly a storage system. He’s a semantic filter. He converts the raw artifacts of life into meaning and action. Which is exactly what my brain has been doing manually (badly).

Matters are how Alfred learns what a life is made of

Humans don’t experience life as a flat task list. We experience it as ongoing concerns. The house. The family. The baby. Health. Money. A client. A product. A renovation. A trip. A conflict. A project that refuses to die.

Alfred calls these matters. A matter isn’t a task. A task has a deadline. A matter has an arc. Matters are long-running areas of life with connected tasks, decisions, and events attached to them: a property development, a health restart, household finances.

They unfold over time.

This is exactly how attention already works. My brain has matter-like objects. When I hear “contractor,” it routes to the house. “Ritalin” routes to health. “Invoice” routes to money. “Post idea” routes to writing. “Rice” routes to the shopping list, unless we’re talking about geopolitics, in which case I’ve made a wrong turn somewhere.

Alfred needs to learn the same routing. That’s what it means to become useful: not to answer trivia, not to generate slop, but to know what part of my life something belongs to.

Once Alfred understands matters, he can steward them. Like a steward, he watches one matter and asks: did anything change here?

He watches for signals, decides whether the matter or its tasks need to change, and either applies the change or surfaces a proposal.
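That loop can be sketched in a few lines. Everything here is an assumption made for illustration: the `Matter` and `Signal` shapes, the task names, and especially the rule that only high-confidence signals are applied directly while everything else becomes a proposal.

```python
from dataclasses import dataclass, field


@dataclass
class Signal:
    matter: str
    task: str
    new_status: str
    confidence: str  # "high", "medium", or "low"


@dataclass
class Matter:
    name: str
    tasks: dict[str, str] = field(default_factory=dict)  # task -> status


def steward(matter: Matter, signals: list[Signal]) -> list[str]:
    """Apply confident changes to one matter; return proposals for the rest."""
    proposals = []
    for s in signals:
        if s.matter != matter.name:
            continue  # belongs to a different matter
        if matter.tasks.get(s.task) == s.new_status:
            continue  # nothing changed here
        if s.confidence == "high":
            matter.tasks[s.task] = s.new_status  # apply the change
        else:
            proposals.append(f"Set '{s.task}' to '{s.new_status}'?")
    return proposals


house = Matter("House", {"Site visit": "open", "Clean pantry": "open"})
pending = steward(house, [
    Signal("House", "Site visit", "scheduled", "high"),
    Signal("House", "Clean pantry", "scheduled", "medium"),
    Signal("Health", "Refill prescription", "open", "high"),
])
```

In this run the site visit is updated silently, the pantry change comes back as a question, and the health signal is ignored because it belongs to another matter.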

That’s the dream. Not “hey AI, summarise my emails” but “watch the house project for me.” Not “hey AI, make me a todo list” but “notice when one of my responsibilities changes state.”

A chatbot answers. A butler attends.

The butler must earn trust

Obviously, I don’t want software freestyle-managing my life because it had a spicy inference. There’s a reason “move fast and break things” wasn’t invented by a father holding a baby. When the thing you break is a demo environment, fine. When the thing you break is your calendar, your bank account, your marriage, or your relationship with your mother, less fine.

So Alfred needs progressive autonomy. That’s the job of Alfred’s intuition: Alfred observes how I route things, turns repeated observations into instincts, and uses a trust gradient to decide whether to act silently or escalate. On day one, Alfred asks about everything. By day thirty, he can handle routine decisions because he’s seen the pattern enough times.

Not full autonomy. Earned autonomy.

At first: “David, this email looks like it belongs to the property matter. Should I file it there?” Then: “David, I’ve seen seven emails from this sender go to the property matter. Should I make that automatic?” Then: “Filed under property. No action needed.” Eventually: nothing — which is the best notification. The best notification is the one that never happens because the thing was handled correctly and safely without needing my attention.
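One way to picture that progression is a counter of confirmed routings per sender and matter. This is a sketch under stated assumptions: the `TrustGradient` class, the `Action` names, and the thresholds are hypothetical. The value 7 echoes the “seven emails” example above, and 20 is an arbitrary placeholder.

```python
from collections import Counter
from enum import Enum


class Action(Enum):
    ASK = "ask"                # "Should I file it there?"
    PROPOSE_RULE = "propose"   # "Should I make that automatic?"
    ACT_AND_REPORT = "report"  # "Filed under property. No action needed."
    ACT_SILENTLY = "silent"    # handled, no notification


class TrustGradient:
    """Tracks confirmed routings per (sender, matter) pair."""

    def __init__(self, propose_after: int = 7, silent_after: int = 20):
        self.propose_after = propose_after
        self.silent_after = silent_after
        self.confirmed = Counter()  # (sender, matter) -> confirmations
        self.automatic = set()      # pairs approved for automation

    def confirm(self, sender: str, matter: str) -> None:
        """Record that the human confirmed this routing once."""
        self.confirmed[(sender, matter)] += 1

    def approve_rule(self, sender: str, matter: str) -> None:
        """Record that the human agreed to make this routing automatic."""
        self.automatic.add((sender, matter))

    def decide(self, sender: str, matter: str) -> Action:
        key = (sender, matter)
        seen = self.confirmed[key]
        if key in self.automatic:
            if seen >= self.silent_after:
                return Action.ACT_SILENTLY
            return Action.ACT_AND_REPORT
        if seen >= self.propose_after:
            return Action.PROPOSE_RULE
        return Action.ASK


trust = TrustGradient()
sender, matter = "contractor@example.com", "property"
first = trust.decide(sender, matter)
for _ in range(7):
    trust.confirm(sender, matter)
after_seven = trust.decide(sender, matter)
trust.approve_rule(sender, matter)
after_approval = trust.decide(sender, matter)
```

Note that automation never switches on by itself in this sketch: the counter can only trigger a proposal, and the human has to approve the rule before anything is filed without asking.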

This is what most tech gets wrong. It wants to impress me. I don’t want to be impressed. I want to not think about bin day.

LLMs aren’t the brain

This is also why I think most AI architecture is wrong. People put the LLM in the centre and ask it to be everything. Memory. Reasoning. Truth. State. Interface. Executor. Judge. Database. Therapist. Intern. Oracle.

A silicon god with latency.

That’s a terrible idea.

LLMs are extremely useful, but not because they’re magical brains. They’re useful because they’re semantic transcoders — good at turning one kind of artifact into another.

Messy meeting transcript into decisions and tasks. Voice note into plan. Email thread into status. Natural language into SQL. Code into explanation. Explanation into code. A chaotic thought into a structured note. A structured note into an action.

That’s the job. The LLM is the translator between messy human life and structured machine state. But state shouldn’t live in the LLM. State should live somewhere durable.

In Alfred, that’s the vault. The LLM helps interpret. The vault remembers. Workflows act. Logs explain. Trust controls autonomy. I remain responsible.

That architecture matters, because I don’t want an AI that confidently hallucinates my life. I want a system that can say: “I know this because it’s in the vault. I did this because this signal matched this instinct. Here’s the evidence. Here’s the undo button.”
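A minimal sketch of “here’s the evidence, here’s the undo button”, assuming a simple in-memory key-value store stands in for the vault. The `AuditedState` class, its field names, and the sample keys are hypothetical. A real vault of Markdown files could get similar guarantees from version control instead.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LogEntry:
    key: str               # what was changed
    before: Optional[str]  # previous value, or None if the key was new
    after: str             # value written
    reason: str            # which signal or instinct led to the change
    evidence: str          # pointer to the source artifact


class AuditedState:
    """Key-value state where every write is logged and reversible."""

    def __init__(self) -> None:
        self.state: dict[str, str] = {}
        self.log: list[LogEntry] = []

    def apply(self, key: str, value: str, reason: str, evidence: str) -> None:
        """Write a value and record why, with the evidence for it."""
        entry = LogEntry(key, self.state.get(key), value, reason, evidence)
        self.log.append(entry)
        self.state[key] = value

    def undo(self) -> LogEntry:
        """Reverse the most recent write and return its log entry."""
        entry = self.log.pop()
        if entry.before is None:
            del self.state[entry.key]
        else:
            self.state[entry.key] = entry.before
        return entry


vault = AuditedState()
vault.apply("tasks/Site visit", "open",
            reason="task created on request", evidence="voice note")
vault.apply("tasks/Site visit", "scheduled",
            reason="supplier confirmation matched a routing instinct",
            evidence="email from cabinet supplier")
undone = vault.undo()
```

After the undo, the task is back to `open`, and the returned entry still says what was attempted and why.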

Much less glamorous than AGI, but more useful.

The real enemy isn’t screens

This is why Alfred belongs on Screenless Dad. At first glance, building an AI butler sounds like the opposite of screenless living. Aren’t I adding more technology? Yes. But the Screenless Dad was never about hating technology. It’s about renegotiating the relationship.

The Screenless Dad isn’t buying an old Nokia and pretending it’s 1999. The goal is living online while screenless: engineering an environment where screens are used with intent, not reflex. The enemy isn’t the screen itself.

The enemy is the screen becoming the interface to responsibility.

I check the phone because I need information. But the information is mixed with traps. I open Gmail to check one thing, see five other things: one creates anxiety, one creates curiosity, one creates a task, one creates shame.

Then I open Slack. Then the calendar. Then I remember something. Then I forget why I started. Then I need a break from the overwhelm caused by seeking clarity, so I reach for stimulation. And now I’m gone. Body in the room. Face turned away.

This isn’t because I lack moral fibre. It’s because modern responsibility is routed through adversarial interfaces.

Alfred’s job is to separate responsibility from extraction. Get the signal without the casino. Tell me what matters without making me swim through everything that doesn’t. Protect the face-turning.

This is the theology of it for me. Presence means turning your face toward the people you love. Modern software keeps asking you to turn your face away. Alfred is my attempt to make the machine look at the machine, so I can look at my family.

The highest use of AI isn’t productivity

I know the obvious pitch. Save time. Automate tasks. Get more done. Inbox zero. Calendar optimisation. Agentic workflows. Whatever. That’s all fine, but it’s not the point. The point isn’t to squeeze more output from my body. It’s to stop spending my best attention on reconstructing context from broken systems.

I don’t want AI to help me become busier. I want it to help me become less interruptible. Less scattered. Less haunted by forgotten promises. Less dependent on screens for memory. Less likely to miss my daughter’s face because I’m rebuilding a project plan in my head.

The best AI shouldn’t make you feel like Tony Stark. It should make you feel like your house is finally in order. Quietly. Without drama. Without applause. The bins went out. The email was filed. The calendar was fixed. The task was created. The contradiction was noticed. The weekly brief arrived. The pantry reminder appeared. The thing that would have become a fight became a handled note in the background.

This is why the butler metaphor works. A butler isn’t valuable because he can chat. He’s valuable because he carries the household in his head. Alfred carries the household outside mine.

What I’m actually building

So here’s the cleanest way I can say it:

Alfred is a private semantic nervous system for my family.

He ingests the artifacts of life: emails, meetings, calls, notes, documents, ambient recordings, calendar events, tasks, health signals, financial data, random things I say while cooking. He decodes them. What is this? Who’s involved? What matter does it belong to? Is there a commitment? A contradiction? A decision? An action? Or is it noise?

He stores the reconstructed meaning in a durable world model: people, projects, matters, events, tasks, decisions, assumptions, constraints, contradictions, instincts. He watches the things that have arcs — the house, health, family logistics, work, money, content, projects. He acts only when trust has been earned. He interrupts me only when my actual human judgment is required.

That’s the thesis. The world has become too fragmented for one person’s working memory.

The old solution was discipline. Check every app. Maintain every list. Remember every promise. Read every email. Build the dashboard. Do the weekly review. Keep the second brain updated. Be more organised. In short, the old solution was:

“Try harder, monkey.”

I’m tired of that. My brain isn’t a failed productivity system. It’s a brain. It should be used for love, judgment, creativity, prayer, play, taste, risk, fatherhood, and building weird things.

Not as middleware between Google Calendar and the pantry.

To prompt less and live more

Prompting is work. It means I remembered the thing, opened the interface, provided context, knew what to ask.

Prompting means I became the router again.

A true butler doesn’t need to be prompted for everything. He shares enough background model with you that small instructions become large actions.

A true butler knows the house.

That’s what I want. Not because I want to escape responsibility. Quite the opposite.

I want to meet my responsibilities without letting them colonise my attention. I want to remember without carrying everything, without checking every tool.

I want to be reachable without being constantly available.

I want the machines to deal with the machine layer.

I want my nervous system back.